Asynchronous Programming, Multithreading, and Multiprocessing

Making your program do some multi-tasking

One weekend, as I usually do, I threw some clothes in the washer, set the timer for 30 minutes, and then proceeded to boil some water in the kitchen. While I scrubbed and mopped the floors, I left the water to boil. In addition to frequently checking the boiling water to make sure it was hot enough to make my cereal, I kept an ear out for when the washing machine would stop.

All these simultaneous activities reminded me of asynchronous programming. I also wished I could create another version of myself (a process) to focus on specific parts of the work. Unfortunately, human beings can only handle tasks asynchronously, whereas our computers can also do multiple activities at the same time by spinning up new threads or new processes.

This article aims to demystify the three major approaches to achieving concurrency and parallelism in a computer program:

Asynchronous programming

Multithreading

Multiprocessing

Asynchronous Programming

Asynchronous programming is a programming paradigm that allows tasks to run independently and concurrently. It allows the execution of multiple tasks without waiting for each one to complete execution before moving on to the next. Just like how I handled my weekend chores!

This means that tasks can be executed in a non-blocking pattern that efficiently handles I/O operations like network calls, reading files, or any other task that waits for an external resource, making better use of the computer's resources.

To understand asynchronous programming better, think about the operation of a big restaurant with only one chef.

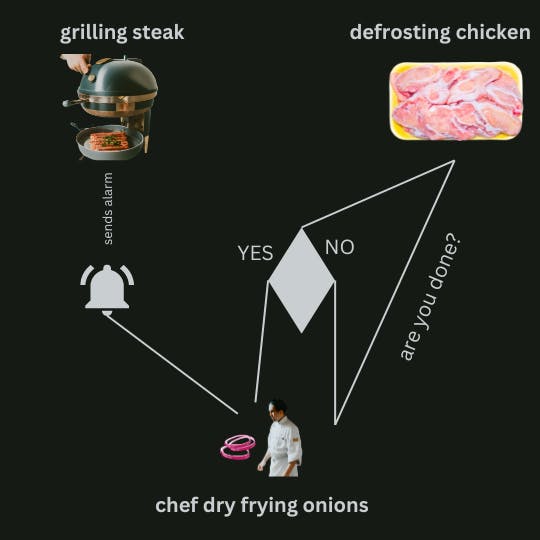

The chef keeps receiving orders and starts cooking each one. Some steps in the cooking process are independent of each other and take a while: dry-frying onions, grilling the steak, and defrosting the frozen chicken can all be in progress at once.

The chef does not need to wait for the steak to finish grilling before dry-frying the onions, or wait for the chicken to defrost before grilling the steak. He simply sets a timer that rings an alarm when the grilling is done, and he checks on the defrosting chicken from time to time to see if all the ice has melted.

The chef has saved more time by setting some time-consuming tasks in motion and proceeding with the other steps in preparing the meal.

Just as the chef has the griller's timer and checks on the chicken, so does asynchronous programming use callbacks, promises, async/await syntax, and event loops to monitor the progress and status of asynchronous tasks.
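Here is a minimal sketch of the chef's routine using Python's asyncio. The function names (`cook`, `prepare_meal`) and the durations are made up for illustration, and `asyncio.sleep` stands in for any step that is mostly waiting.

```python
import asyncio
import time


async def cook(step: str, seconds: float, finished: list[str]) -> None:
    """Simulate a kitchen step that mostly consists of waiting."""
    # await hands control back to the event loop, so other steps can progress.
    await asyncio.sleep(seconds)
    finished.append(step)


async def prepare_meal() -> tuple[list[str], float]:
    finished: list[str] = []
    start = time.perf_counter()
    # Start all three steps, then wait until every one of them is done.
    await asyncio.gather(
        cook("grill steak", 0.3, finished),
        cook("fry onions", 0.1, finished),
        cook("defrost chicken", 0.2, finished),
    )
    return finished, time.perf_counter() - start


if __name__ == "__main__":
    order, elapsed = asyncio.run(prepare_meal())
    print(order)             # steps in the order they finished
    print(f"{elapsed:.2f}s")  # total time for the whole meal
```

The steps finish shortest-first, and the whole meal takes roughly as long as the slowest step rather than the sum of all three.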

The implementation of asynchronous programming varies across different programming languages. However, the overall goal is the same:

Allowing a single thread to run multiple tasks concurrently without blocking the flow of execution on the thread.

If you don't understand the concept of threads, don't worry, we are going to discuss threads in the next section. What I am trying to establish with the highlight above is that asynchronous programming typically happens on a single thread.

To understand the next two concepts, multithreading and multiprocessing, we must first understand the concepts underlying them: threads and processes.
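If you want to see the single-thread point for yourself, the sketch below (hypothetical names, Python's asyncio assumed) records the identifier of the thread each task runs on, before and after it waits.

```python
import asyncio
import threading


async def task(seen_threads: set[int]) -> None:
    # Record which thread runs this coroutine before and after waiting.
    seen_threads.add(threading.get_ident())
    await asyncio.sleep(0.05)
    seen_threads.add(threading.get_ident())


async def run_tasks() -> set[int]:
    seen_threads: set[int] = set()
    # Five tasks run concurrently on the event loop.
    await asyncio.gather(*(task(seen_threads) for _ in range(5)))
    return seen_threads


if __name__ == "__main__":
    print(len(asyncio.run(run_tasks())))  # number of distinct threads used
```

All five tasks report the same thread identifier, so the set ends up with exactly one entry.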

Threads and Processes

You definitely know we're not sewing clothes here; we are talking about threads of execution in a computer. Threads are lightweight units of execution within a process. They enable the concurrent execution of multiple tasks within a single program, thereby allowing improved performance and responsiveness.

The good thing about working with threads is that they share the same memory space and resources of the parent process, which means they can directly access variables and data structures within that process.
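As a small illustration, here is a sketch in Python where three threads write into one dictionary that belongs to the process. The names (`orders`, `take_order`, `serve_tables`) are invented for this example.

```python
import threading

# One dictionary owned by the process; every thread sees the same object.
orders: dict[str, str] = {}


def take_order(table: str, dish: str) -> None:
    # Each thread writes its own key, so no lock is needed in this sketch.
    orders[table] = dish


def serve_tables() -> dict[str, str]:
    threads = [
        threading.Thread(target=take_order, args=(f"table-{i}", dish))
        for i, dish in enumerate(["steak", "chicken", "salad"], start=1)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()  # wait for all threads to finish
    return orders


if __name__ == "__main__":
    print(serve_tables())
```

No copying or message passing is involved: each thread changes the very same `orders` object that the main thread reads afterwards.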

What then is a process? A collection of threads?

While a thread is a unit of execution within a process, a process is an instance of a program running on a computer. When a program is executed, the operating system typically creates one or more processes for it. Each process represents the execution of that program as a separate entity. Processes are isolated from each other, meaning they cannot directly access the memory or resources of other processes unless specific mechanisms are in place for inter-process communication.
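The isolation can be sketched with Python's multiprocessing module. This example assumes a POSIX system and asks for the "fork" start method explicitly; the names (`pantry`, `restock`, `run_child`) are made up.

```python
import multiprocessing as mp

# Assumption: a POSIX system, where the "fork" start method is available.
ctx = mp.get_context("fork")

pantry = ["salt"]


def restock() -> None:
    # Runs in the child process and changes only the child's copy of pantry.
    pantry.append("pepper")


def run_child() -> int:
    child = ctx.Process(target=restock)
    child.start()
    child.join()  # wait for the child to exit
    return child.exitcode


if __name__ == "__main__":
    run_child()
    print(pantry)  # the parent's list is untouched by the child
```

The child finishes successfully, yet the parent's `pantry` still holds only "salt", because the child was working on its own copy of the memory.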

Combining the definitions of both terms, we can say that the following is true:

A program executes on the computer by spawning one or more processes. Each of those processes utilizes one or more threads, one for every unit of execution within that process.

Now that we understand threads and processes, let's consider the second approach to multi-tasking in computer programs: multithreading.

Multithreading

As the name implies, multithreading involves dividing a program so that it utilizes more than one thread. Different parts of the program's work run concurrently on independent threads within a single process. Programmers use multithreading because threads are more lightweight than processes.

A big bonus of threads is that they share the same memory space as the parent process, which makes communication between them more efficient.

That said, let's head back into the kitchen to see how the multithreading concept might improve our dish-processing efficiency.

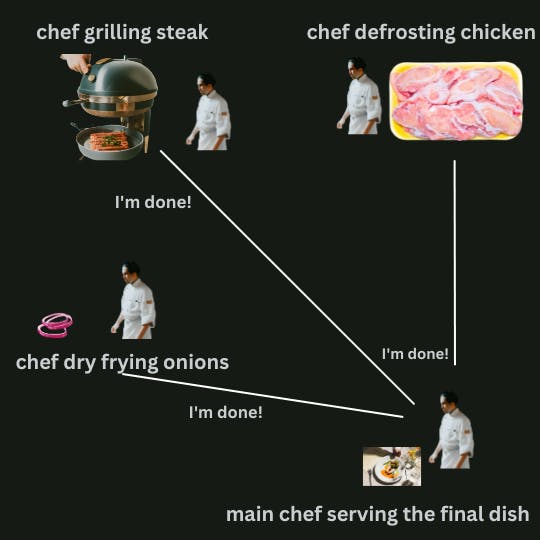

The restaurant is growing, and the chef begins to realize that running the kitchen alone is not very efficient: customers are complaining. He decides to hire 3 assistant chefs to help him out and becomes the "main chef".

The main chef is still very much in charge of the kitchen, but now he can direct each assistant chef to focus on one activity. This allows closer monitoring of that activity and reduces mistakes, since each activity has exactly one chef in charge: one grills the steak, another chops the vegetables, and another plates the dishes.

Communication is seamless because all the chefs are present in the same kitchen and can easily share the same ingredients and equipment, improving the kitchen's capacity to deliver customers' orders on time.

Multithreading shines in applications that can be divided into smaller, independent tasks that can run in parallel. It lets programs take advantage of multicore processors, because different threads can be assigned to different cores, enabling true parallel execution where the language runtime allows it.
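Here is a rough sketch of the three assistant chefs as a thread pool in Python. One caveat: in CPython's default build, the global interpreter lock keeps threads from running Python bytecode in parallel, so this example uses `time.sleep` as a stand-in for blocking work such as I/O, where threads overlap nicely. The names `prepare` and `run_kitchen` are hypothetical.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def prepare(task: str) -> str:
    time.sleep(0.2)  # stand-in for waiting on a grill, a disk, or a network
    return f"{task}: done"


def run_kitchen(tasks: list[str]) -> tuple[list[str], float]:
    start = time.perf_counter()
    # Three worker threads play the role of the three assistant chefs.
    with ThreadPoolExecutor(max_workers=3) as pool:
        results = list(pool.map(prepare, tasks))  # results keep input order
    return results, time.perf_counter() - start


if __name__ == "__main__":
    results, elapsed = run_kitchen(["grill steak", "chop vegetables", "plate dishes"])
    print(results)
    print(f"{elapsed:.2f}s")
```

With three workers the three tasks wait at the same time, so the total is close to the duration of one task instead of three.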

Sharing data across multiple threads has clear benefits, as we have seen. However, that shared data also introduces challenges. Programs that use multithreading need proper thread synchronization, sensible use of thread pools, and protection against data races in order to avoid problems like data corruption or deadlocks. This holds even in languages like Go and Java that ship with built-in concurrency features.
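One common form of thread synchronization is a lock. The sketch below, with an invented `OrderCounter` class, guards a shared counter so that increments from several threads are never lost.

```python
import threading


class OrderCounter:
    """A counter shared by several threads, guarded by a lock."""

    def __init__(self) -> None:
        self.value = 0
        self._lock = threading.Lock()

    def increment(self) -> None:
        # Read-modify-write must happen as one unit, or updates can be lost.
        with self._lock:
            self.value += 1


def count_orders(workers: int = 4, per_worker: int = 10_000) -> int:
    counter = OrderCounter()

    def work() -> None:
        for _ in range(per_worker):
            counter.increment()

    threads = [threading.Thread(target=work) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter.value


if __name__ == "__main__":
    print(count_orders())
```

Because only one thread can hold the lock at a time, the final count always equals `workers * per_worker`. The price is that threads occasionally wait for each other, and careless use of several locks is how deadlocks appear.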

Multiprocessing

Multiprocessing takes parallelism a step further: programs with computationally intensive work use multiple processes to execute tasks simultaneously. Unlike threads, processes are independent and have their own memory space. This isolation makes them more robust and stable.

A crash is also better contained than the data corruption and deadlock issues of multithreading: when one process crashes, the other running processes are not affected, because they do not share its memory space. Each process can focus on one computationally intensive task that needs as much of the CPU as it can get.
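To see crash isolation in action, here is a sketch with three processes where one is made to die abruptly. It assumes a POSIX system and explicitly requests the "fork" start method; `kitchen` and `run_kitchens` are hypothetical names.

```python
import multiprocessing as mp
import os
import time

# Assumption: a POSIX system, where the "fork" start method is available.
ctx = mp.get_context("fork")


def kitchen(name: str, should_crash: bool) -> None:
    if should_crash:
        os._exit(1)  # simulate an abrupt crash of this process only
    time.sleep(0.1)  # the healthy kitchens keep working


def run_kitchens() -> dict[str, int]:
    plan = {"grill": False, "pastry": True, "salad": False}
    procs = {n: ctx.Process(target=kitchen, args=(n, c)) for n, c in plan.items()}
    for p in procs.values():
        p.start()
    for p in procs.values():
        p.join()
    # Exit code 0 means the process finished normally.
    return {n: p.exitcode for n, p in procs.items()}


if __name__ == "__main__":
    print(run_kitchens())
```

Only the "pastry" process reports a non-zero exit code; the other two finish normally, and the parent is free to restart the failed one.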

We can pay a visit again to our favourite restaurant and see how multiprocessing works for them. Imagine the restaurant has kept growing and customers have been requesting a wider menu, not just grilled steak and chicken anymore.

The main chef tries to hire many more assistant chefs to handle the different dishes on the menu, but operating and managing more chefs in this small kitchen would be disastrous. So he decides to build more kitchens!

Each kitchen is dedicated to making specific dishes. In every kitchen, there's a team of chefs and all the resources, ingredients, and equipment that they need to finish their dishes. To allow the kitchens to communicate and exchange data with each other, the main chef also hires some clerks who run errands like carrying finished side dishes from one kitchen to another.

Multiprocessing is great for CPU-bound tasks like video editing, image processing, gaming, etc. The number and speed of the CPU's cores also determine how fast a multiprocessing program runs.

However, processes have no "out-of-the-box" way to share data, which makes communication between them a bit more difficult to set up. Programmers have to use inter-process communication (IPC) mechanisms like pipes and shared memory to allow processes to exchange data and coordinate their activities.
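As an example of one such mechanism, here is a sketch of two processes talking over a pipe with Python's multiprocessing module. It assumes a POSIX system and the "fork" start method; the `side_kitchen` and `ask_side_kitchen` names are invented.

```python
import multiprocessing as mp

# Assumption: a POSIX system, where the "fork" start method is available.
ctx = mp.get_context("fork")


def side_kitchen(conn) -> None:
    # Receive a list of numbers, send back their squares, then hang up.
    numbers = conn.recv()
    conn.send([n * n for n in numbers])
    conn.close()


def ask_side_kitchen(numbers: list[int]) -> list[int]:
    parent_end, child_end = ctx.Pipe()  # two connected endpoints
    child = ctx.Process(target=side_kitchen, args=(child_end,))
    child.start()
    parent_end.send(numbers)    # the data is serialized and copied
    result = parent_end.recv()  # block until the child answers
    child.join()
    return result


if __name__ == "__main__":
    print(ask_side_kitchen([1, 2, 3]))
```

The pipe plays the role of the clerk: nothing is shared, so every message is serialized on one side and rebuilt on the other, which is where the extra cost of process communication comes from.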

Comparisons, Strengths, and Trade-Offs

Choosing the best approach among asynchronous programming, multithreading, and multiprocessing ultimately depends on the specific requirements of the program. Each offers its own advantages and considerations for using system resources efficiently and improving performance.

Asynchronous programming allows for non-blocking and concurrent execution, particularly beneficial in scenarios involving I/O operations or tasks dependent on external resources. It enables programs to remain responsive and maximizes resource utilization by efficiently handling multiple tasks concurrently.

Multithreading facilitates concurrent execution within a single process, dividing the workload into lightweight threads. It's great for tasks like running web servers, data analysis, and parallel algorithms.

When a program spends most of its time switching between many waiting tasks, many programmers will choose asynchronous programming over multithreading. One reason is that data races are easier to avoid, because asynchronous functions running on one thread do not access the same data structure from separate threads. Another is that the program does not have to manage a set of threads and wait for each one to finish while its functions sit idle: whenever a function in an asynchronous execution is idle or waiting, execution is handed to another function that is ready to continue.

Multiprocessing takes parallelism to the next level by employing multiple processes to execute tasks simultaneously. Each process operates independently with its own memory space, offering robustness and stability. Multiprocessing is ideal for computationally intensive tasks that require efficient utilization of available hardware resources, such as scientific calculations, genetic algorithms, and distributed algorithms.

However, it introduces more complexity. For example, inter-process communication (IPC) incurs additional computational and memory costs. Other costs include the resources each process needs, the difficulty of balancing load across processes, the fact that exceptions are not shared between processes, and the effort of context switching between them.

Multiprocessing remains a powerful efficiency tool. With proper design, careful consideration of resource allocation, and effective inter-process communication, multiprocessing can enable significant performance improvements in computationally intensive tasks.
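To make this concrete, here is a sketch that splits a CPU-bound job (counting primes) across several processes and collects the partial results through a queue. It assumes a POSIX system and the "fork" start method, and all function names are hypothetical.

```python
import multiprocessing as mp

# Assumption: a POSIX system, where the "fork" start method is available.
ctx = mp.get_context("fork")


def is_prime(n: int) -> bool:
    if n < 2:
        return False
    i = 2
    while i * i <= n:
        if n % i == 0:
            return False
        i += 1
    return True


def count_primes(start: int, stop: int, results) -> None:
    # Each worker handles its own slice and reports a single number.
    results.put(sum(1 for n in range(start, stop) if is_prime(n)))


def parallel_prime_count(limit: int, workers: int = 4) -> int:
    results = ctx.Queue()
    step = max(1, -(-limit // workers))  # ceiling division: slice size
    procs = [
        ctx.Process(target=count_primes, args=(lo, min(lo + step, limit), results))
        for lo in range(0, limit, step)
    ]
    for p in procs:
        p.start()
    total = sum(results.get() for _ in procs)  # collect before joining
    for p in procs:
        p.join()
    return total


if __name__ == "__main__":
    print(parallel_prime_count(100))
```

Each worker sends back only one small number, which keeps the IPC overhead low compared with the computation itself. Equal-sized slices are not equally expensive here, which is a small taste of the load-balancing difficulty mentioned above.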

Making the Right Decision

Knowing the different approaches is one thing; deciding which to choose is another! As said earlier, it ultimately depends on the task at hand. When I need to decide which of these patterns to adopt in my program, I follow this pseudocode:

    # Example flags; set these to describe your own workload.
    io_bound = True
    io_very_slow = True

    if io_bound:
        if io_very_slow:
            print("Use Async")
        else:
            print("Use Multithread")
    else:
        # i.e. CPU-bound or memory-bound
        print("Use MultiProcess")

CPU-bound => Multiprocessing

I/O-bound, fast I/O, limited number of connections => Multithreading

I/O-bound, slow I/O, many connections => Asyncio